Nvidia Corp., the world’s most valuable chipmaker, is updating its H100 artificial intelligence processor, adding more capabilities to a product that has fueled its dominance in the AI computing market.

The new model, called the H200, will use a faster generation of high-bandwidth memory, known as HBM3e, allowing it to better cope with the large data sets needed for developing and implementing AI, Nvidia said Monday. Amazon.com Inc.’s AWS, Alphabet Inc.’s Google Cloud and Oracle Corp.’s Cloud Infrastructure have all committed to using the new chip starting next year.

The current version of the Nvidia processor — known as an AI accelerator — is already in famously high demand. It’s a prized commodity among technology heavyweights like Larry Ellison and Elon Musk, who boast about their ability to get their hands on the chip. But the product is facing more competition: Advanced Micro Devices Inc. is bringing its rival MI300 chip to market in the fourth quarter, and Intel Corp. claims that its Gaudi 2 model is faster than the H100.

With the new product, Nvidia is trying to keep up with the size of data sets used to create AI models and services, it said. Adding the enhanced memory capability will make the H200 much faster at bombarding software with data — a process that trains AI to perform tasks such as recognizing images and speech.

“When you look at what’s happening in the market, model sizes are rapidly expanding,” said Dion Harris, who oversees Nvidia’s data center products. “It’s another example of us continuing to swiftly introduce the latest and greatest technology.”

Large computer makers and cloud service providers are expected to start using the H200 in the second quarter of 2024.

Nvidia doesn’t usually refresh its data-center processors, “so this represents further evidence of Nvidia accelerating their product cadence in response to AI market growth and performance requirements, which further expands their competitive moat,” Wolfe Research analyst Chris Caso wrote in a note to clients. The company may be able to demand higher prices given the performance increase the new version offers, Caso said.

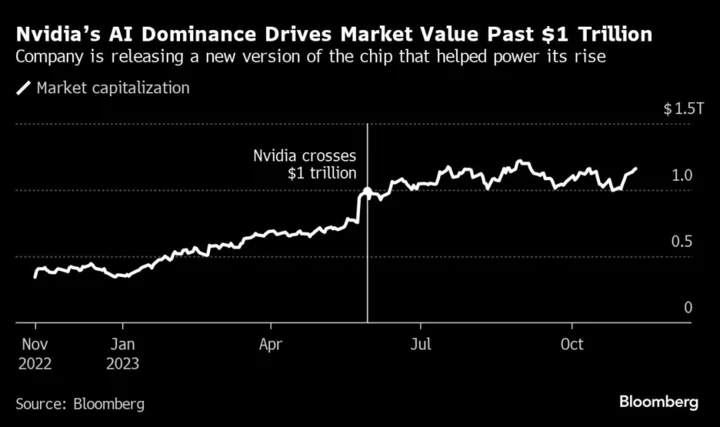

Nvidia shares gained as much as 1.5% in New York on Monday, making it one of only two large semiconductor stocks to rise. So far this year Nvidia is up more than 200%, making it by far the best performer on the Philadelphia Stock Exchange Semiconductor Index.

Nvidia got its start making graphics cards for gamers, but its powerful processors have now won a following among data center operators. That division has gone from being a side business to the company’s biggest moneymaker in less than five years.

Nvidia’s graphics chips helped pioneer an approach called parallel computing, where a massive number of relatively simple calculations are handled at the same time. That’s allowed it to win major orders from data center companies, at the expense of traditional processors supplied by Intel.

The growth helped turn Nvidia into the poster child for AI computing earlier this year — and sent its market valuation soaring. The Santa Clara, California-based company became the first chipmaker to be worth $1 trillion, eclipsing the likes of Intel.

Still, it’s faced challenges this year, including a crackdown on the sale of AI accelerators to China. The Biden administration has sought to limit the flow of advanced technology to that country, hurting Nvidia’s sales in the world’s biggest market for chips.

The rules barred the H100 and other processors from China, but Nvidia has been developing new AI chips for that market, according to a local media report last week.

Nvidia will give investors a clearer picture of the situation next week. It’s slated to report earnings on Nov. 21.

(Updates with analyst comment, share move)